Aims and background

The aim has been to explore action-sound couplings in human-computer interaction based on the conviction that there are many close links between sound and body motion in music and that these links should be studied and exploited in various new music-making and everyday contexts.

Results

The results of the project can be summarised as follows:

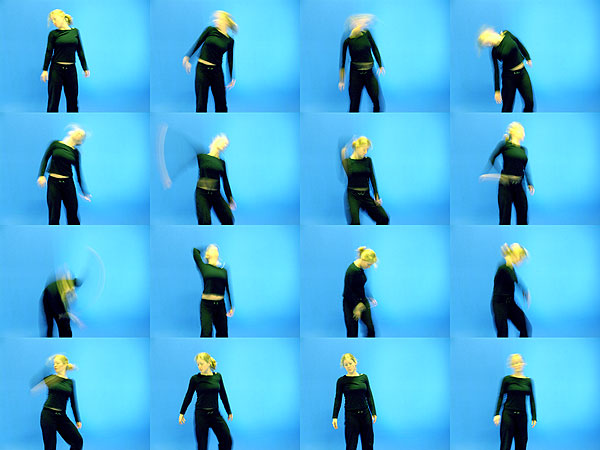

- Basic understanding. We have documented that there are indeed many robust links between sound and body motion in music, both in its production and its perception. Experiences of close relationships between sound and body motion seem to apply equally well to novices and experts: people with little or no musical training also seem to spontaneously associate body motions with musical sound, often tracing melodic contours and rhythmical patterns with their hands, head, torso and feet, and reflecting the overall affective features of the music (e.g. calm, agitated, fast, slow) in their body motion. Our research in this area has enhanced our knowledge of music-related actions and our general understanding of music as a multimodal phenomenon.

- Methods and technologies. Music-related action is a relatively new area of research, and our project has been at the cutting edge internationally in developing methods (experimental and observational study designs) and technologies for capturing and processing motion and sound data (various so-called motion capture technologies and associated schemes for formatting, representing, visualising, classifying and interpreting such data). This methodological work has also included studying combinations of sound and motion data in musical applications, such as interfaces for new digital musical instruments and interactive sonification applications (i.e., using sound output to represent various data). In line with its stated aims, the project has thus resulted both in basic theoretical insights relevant to various musical, HCI, everyday and therapeutic contexts, and in tools for further research and practical use in this highly interdisciplinary area.

Main R&D activities

Our primary research and development activities have been concerned with the following:

- Enhancing our understanding of motion-sound couplings in the production and perception of sound and music.

- Developing means for motion capture by adapting and extending available technologies and developing new technologies.

- Developing means for processing and representing motion and sound data.

- Developing means for exploiting innate and learned sound-action links in the design of new musical instruments, HCI, and everyday contexts.

Research environment and cooperation

The project required expertise in musicology, music psychology, human movement science, and informatics, resulting in the following:

- Establishment of an interdisciplinary environment between the Departments of Informatics and Musicology (and, towards the end of the project, the Department of Psychology as well) at the University of Oslo and other national partners concerned with human movement.

- Establishment of research infrastructure with lab facilities for human movement research in the Music, Mind, Motion, Machines lab (the fourMs lab), thanks to additional funding from the RCN and the University of Oslo. This also necessitated much work on evaluating, procuring, installing, and adapting existing technologies and on developing our own extensions to current technologies, cf. the method development work mentioned above.

- We have put much effort into our international cooperation, as we have been at the cutting edge of both theories and methods. In particular, we have had an extensive and fruitful international collaboration on technologies, with exchanges of software and general know-how. This has included participating in the EU COST Action SID (Sonic Interaction Design) and in the international NIME (New Interfaces for Musical Expression) community, for which we also arranged the large NIME conference in Oslo in 2011, as well as participating in the still ongoing EU project EPICS (Engineering Proprioception in Computing Systems). Additionally, we have had a bilateral agreement with McGill University in Montreal and several more informal cooperation schemes with various European partners, entailing several research exchanges and workshops.

- The establishment of cooperation within Norway on human movement research (with NTNU and NIH) also resulted in our developed software being used in various medical diagnostic contexts.

- Additionally, our research effort and our international and national cooperation have, in the course of the project, resulted in our strongly interdisciplinary fourMs Centre of Excellence (CoE) proposal to the RCN. The proposal received very high scores from the international expert panel, also in the final round of the competition, but was unfortunately not selected as one of the new Centres of Excellence in November 2012. However, the work put into this CoE proposal, and the favourable assessments it received, should be an impetus for similar research proposals in the future.

Dissemination and expected use of results

Throughout the project, we have had a relatively high level of dissemination activity, both to our peers and the general public:

- We have had a relatively large number of public lectures/demos and concerts and a fair amount of press coverage. We have also worked closely with various musicians and other performance artists as a means of research dissemination and testing ground for our findings and technologies.

- As seen from the publication lists, we have presented our work to our peers at a relatively large number of international conferences and in international publications. The work has also resulted in two awarded Ph.D. degrees.

- New hardware and software applications for better motion capture, processing, and mapping, which are used worldwide in research and artistic environments (see fourMs downloads).

- Elements from our research have been integrated into several bachelor's and master's courses and master's theses at the University of Oslo, and into the annual EU-funded International Summer School in Systematic Musicology (ISSSM) for master's and Ph.D. students.

Cooperation and Financing

Sensing Music-related Actions is a joint research project of the Departments of Musicology and Informatics, and has received external funding through the VERDIKT program of the Research Council of Norway. The project ran from July 2008 until December 2012.